A Preliminary Look at the State of Gender Disaggregated Aid Data

We’ve recently begun looking into what role, if any, transparency could play in the push for more or better gender equality. Although we’re still in the early stages, along the way we’ve made some pretty interesting discoveries and wanted to share what we have learned so far.

The data is needed

The need for gender disaggregated aid data has become progressively more evident over the past decade. Throughout our conversations, implementing agencies have expressed the need for a variety of data ranging from the typical – forward looking data on where donors are looking to work – to more specific, often results-based, disaggregated data which extends beyond simple ratios between male and female and includes more detailed, intersectional data. Intersectional data could include information such as income, age, race or ethnic background, disability status, and whether people live in rural or urban areas. This kind of data would provide a much clearer picture of the needs of women and men, as well as their interaction with development projects. Without this kind of knowledge, agencies are basing their projects on only partial information.

What data is already out there?

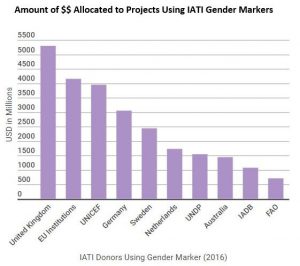

Before asking for more data, we need to consider what is already available. Although IATI introduced a gender marker in 2013, there’s been little uptake. Despite there being over 26,900 active projects “tagged” as being principally about gender equality, it’s notable that some of the largest donors in the world, such as the United States, France, and Italy, aren’t using it.

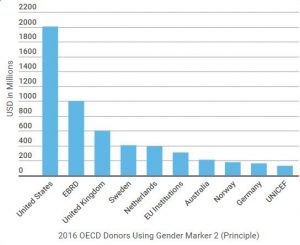

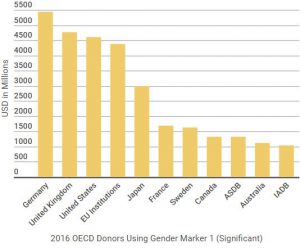

Further, the OECD-DAC introduced three levels of a gender equality policy marker in 2016: “principal”, “significant” or “not targeted”. From this data we can see that, in 2016, $4.2 billion, or just 1.7% of the total $248 billion of worldwide ODA, had gender equality as its principal objective. Meanwhile, a further $33 billion, or 13.3% of total ODA, had gender equality as a significant objective. However, while this data is unquestionably useful in tracking the overall funding to gender equality related programs, it is both back-dated and has a limited ability to give a full picture of what is actually happening on the ground. Implementing agencies usually collect the majority of this data themselves, as well as relying on other organisations in the field, and donors, for their data.
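For readers who want to check the shares quoted above, here is a minimal sketch of the arithmetic. It uses only the three 2016 figures given in the text; the variable names and the `share` helper are ours, for illustration only, and the combined figure is simply the sum of the two categories rather than a number taken from the OECD-DAC data.

```python
# Figures as quoted in the text (2016, billions of US dollars).
total_oda = 248.0    # total worldwide ODA
principal = 4.2      # gender equality as the principal objective
significant = 33.0   # gender equality as a significant objective


def share(part, total):
    """Return `part` as a percentage of `total`, rounded to one decimal place."""
    return round(100 * part / total, 1)


principal_share = share(principal, total_oda)
significant_share = share(significant, total_oda)
# Illustrative only: both marker levels added together.
combined_share = share(principal + significant, total_oda)

print(f"Principal objective:   {principal_share}% of ODA")
print(f"Significant objective: {significant_share}% of ODA")
print(f"Either level:          {combined_share}% of ODA")
```

On these figures, roughly 15% of ODA carried either level of the marker, which means the remainder was either not targeted at gender equality or not screened against the marker.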

There is limited sharing of data

This lack of formalised mechanisms for sharing data was raised by several people we spoke with. While they all agreed that there was a generally positive attitude between organisations, which made sharing data informally possible, they also agreed that the lack of formal sharing mechanisms was a hindrance, as individuals need to know who to contact. Some were concerned that such informal processes inadvertently exclude some local actors.

Additionally, while this data may be casually shared among organisations, it is rarely published in its most granular forms. Some form of gender disaggregated data is usually included in midterm, final and donor reports published by implementers. However, these published data sets are usually incomplete and are not housed together in a central repository. In order to encourage data use, data should be easily and quickly accessible. Interviewees mentioned specifically that this was not the case, and that they had to invest significant time and energy gathering data and discerning its utility before being able to use it, which became a significant hindrance to data use.

Priority areas

One of the major problems arising from patchy data-sharing is that there is no common understanding of what constitutes ‘good’ gender data.

Many of the people we spoke to expressed frustration about the quality of the gender disaggregated data being collected. At the most basic level, gender disaggregated data could simply be counting the number of women and men who participated in a project. We’re told that this information is incapable of providing a clear, nuanced picture of a project. However, there is no benchmark for what level of data would provide such a picture. One implementer in particular noted that now was the time for a serious rethink of “what is gender data, what is good gender data, and what gender data do we need to make sure we are gathering?”

No comparison = no results

All of these problems come to a head around comparison. If there is no standardisation of priority areas, and no formal sharing mechanisms, it becomes difficult to accurately compare baseline, progress and results between projects or organisations. Throughout our conversations, the uneven nature of data sharing was brought up as a serious hindrance to project progress. In one case, this uneven landscape resulted in one implementer having to fundamentally shift the direction of a project in year three because it turned out another organisation was conducting similar work in the same area. While this is the result of the larger problem of organisational coordination, it is a particular problem in projects targeting gender inequality. Given the amount of energy and focus going into these types of projects, having no standard for good data collection, and therefore limited ability to compare data, will inevitably result in overlaps and gaps in gender work, some of them potentially significant.

As you can see, the field of gender disaggregated data is a busy one. There are lots of people and organisations working in this area, at all levels of development. Inevitably, these all come with different understandings and opinions about the best ways to proceed. At Publish What You Fund, we’ve tried to gather as much information as possible, in order to form some general observations about what’s happening, what’s working, and what might be missing in this field. Needless to say, there is much more to be said about gender disaggregated data, and this is just a short start.